Now let’s create the Synapse Workspace linking the storage account and the container that will be used:Īt this point you should have the following resources created:

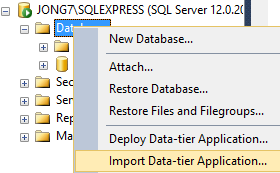

With our data saved, now we’ll create a Synapse Workspace to consume the parquet file, but first let’s add the resource provider for the synapse service into our subscription: We can also take a look at the pipeline created, if we need to add a table or remove from the routine, it can be done from here:Īccessing our container, all data copied will be there: Then we’ll see the summary of all operations:ĭeployment completed, now we can monitor our pipeline and see the results: Next, we have to configure: task name and logging options: We have some options to choose, I’ll use Parquet format and snappy compression. Then let’s select our container created earlier: Now, let’s set up our target destination, clicking on new connection: Once connected you’ll see a list of all tables in the database, you can choose multiple tables to export or create a query to export specific data, in this lab we’re gonna use a query to get a subset of records from the Posts table. If our connection was successful then we can click create Here we need to click on New connection and choose our self-hosted in connect via integration runtime It’ll open the copy data tool, for now we’ll use the “Built-in copy task” with “Run once now” to run a single load. Returning to the portal we can see our integration runtime: Then finally our on-premises server is registered and connected with Azure Data Factory: Once installed, it’ll ask for the authentication key from the previous step: Then you’re gonna need to download and install the integration runtime in the SQL Server host. The steps to configure Integration Runtime are the following:Īzure Portal → Data Factory → Manage → Integration Runtimes -> New Now we’re gonna need to configure a self-hosted integration runtime. We’ll use Azure Data Factory to create a pipeline to transfer data from our local SQL Server to the Azure Data Lake.įor this to work we need to set up a connection to our on-premises SQL, so it can be used as a data source, this way we avoid setting up a site to site connection or a VPN. To be able to store our data, first we need to create a Storage Account in Azure portal.Īfter that we need to create a container where our data will be stored, I created one named dataloc:Īlso, we need to enable Data Lake Gen2, but first let’s disable ENABLE SOFT DELETE FOR BLOBS, without disabling it we’ll receive an error when upgrading to Gen2 The database to be used for this lab it’s the open source data export from Stack Overflow (2010 version) in a 2019 SQL Server instance (developer edition). +++ Azure Synapse Analytics 1 – Source database +++ Azure Data Lake Gen2 Container to storage our data +++ On-premises SQL Server with a StackOverflow database installed. The idea for this lab was to create a simple BI environment in Azure using a local SQL Server sending data to a Data Lake through Azure Data Factory For this to be possible we’re going to use the following resources:

0 Comments

Leave a Reply. |

AuthorWrite something about yourself. No need to be fancy, just an overview. ArchivesCategories |

RSS Feed

RSS Feed